At NVIDIA GTC, Lenovo announced an expanded set of Hybrid AI Advantage solutions developed with NVIDIA, positioning the company to address growing demand for real-time AI inferencing across enterprise and cloud environments.

The update reflects a broader shift in the AI market. As organizations move beyond model training, inferencing (running trained AI models in real-world scenarios) is becoming the primary driver of business value. Lenovo is aligning its infrastructure portfolio to support that transition across edge, data center, and cloud deployments.

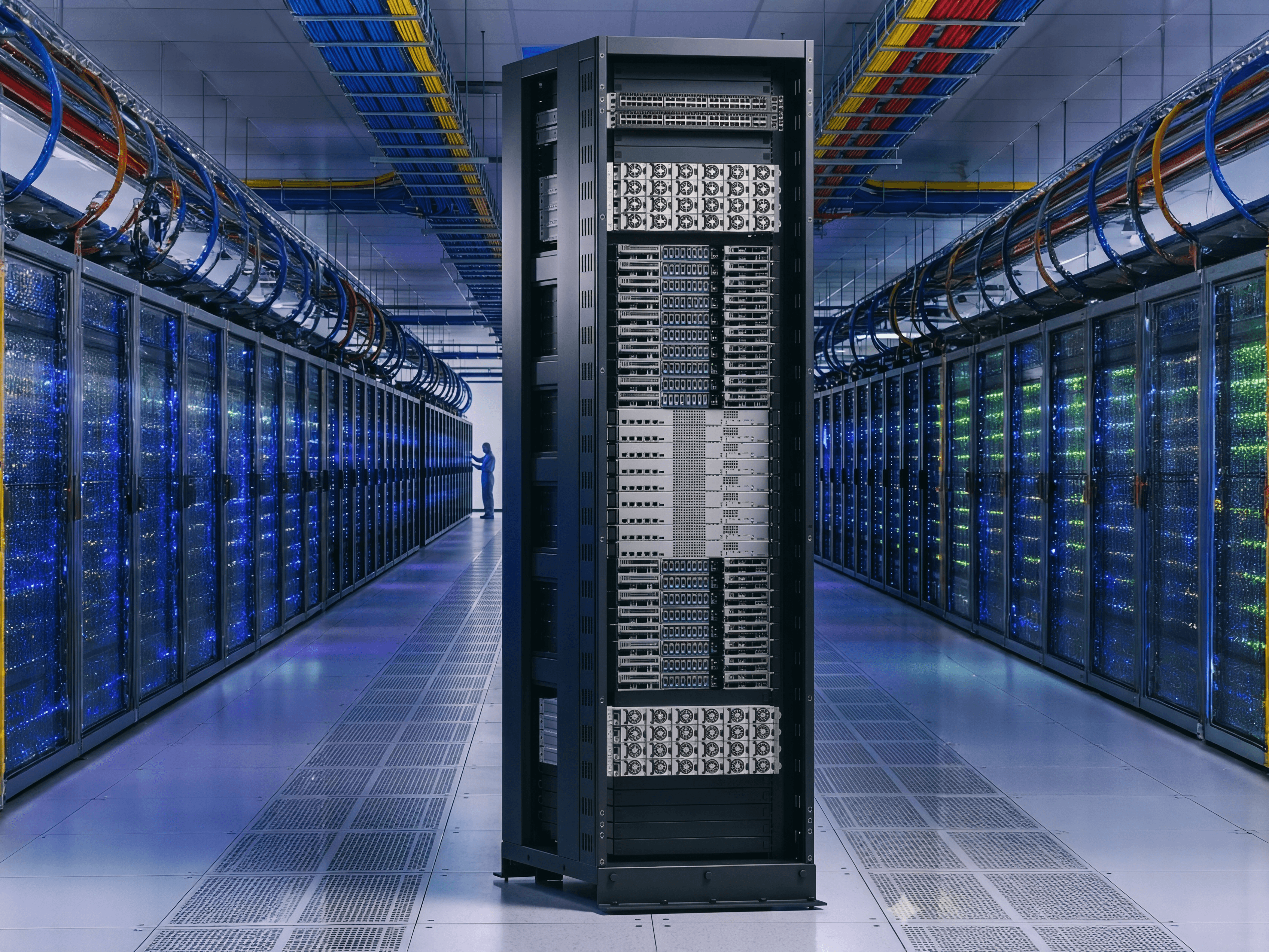

The company said its Hybrid AI Advantage platform now spans everything from individual workstations to what it describes as gigawatt-scale AI factories. The goal is to reduce time-to-first-token (the delay between submitting a request and receiving the first generated token), improve cost efficiency, and enable real-time decision-making across industries.
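
For readers unfamiliar with the metric, time-to-first-token can be measured on any streaming response by timing how long the first token takes to arrive. The following is a minimal Python sketch, not Lenovo's or NVIDIA's tooling: `fake_token_stream` is a hypothetical stand-in for a streaming inference endpoint, and the delay values are arbitrary placeholders.

```python
import time
from typing import Iterable, Iterator, Tuple


def fake_token_stream(
    tokens: Iterable[str], first_delay_s: float, step_delay_s: float
) -> Iterator[str]:
    """Hypothetical stand-in for a streaming inference endpoint.

    The first token arrives after first_delay_s (queueing plus prompt
    processing); each later token arrives after step_delay_s.
    """
    for i, tok in enumerate(tokens):
        time.sleep(first_delay_s if i == 0 else step_delay_s)
        yield tok


def measure_stream(stream: Iterator[str]) -> Tuple[float, float, int]:
    """Return (time_to_first_token_s, total_time_s, token_count)."""
    start = time.perf_counter()
    ttft = None
    count = 0
    for _ in stream:
        if ttft is None:
            # Record the elapsed time when the first token arrives.
            ttft = time.perf_counter() - start
        count += 1
    total = time.perf_counter() - start
    if ttft is None:
        raise ValueError("stream produced no tokens")
    return ttft, total, count


if __name__ == "__main__":
    stream = fake_token_stream(["Hello", ",", " world"], 0.05, 0.01)
    ttft, total, n = measure_stream(stream)
    print(f"TTFT: {ttft * 1000:.1f} ms, total: {total * 1000:.1f} ms, tokens: {n}")
```

In a real deployment the stream would come from the serving stack's client library, and the clock would start when the request is sent.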

From development to production AI

Lenovo’s latest announcement focuses on operationalizing AI, moving workloads from experimentation into production environments. This includes new AI inferencing platforms built with NVIDIA software such as Dynamo and NIM, alongside infrastructure designed for large-scale deployments.

The company is also extending AI capabilities to end-user systems. New mobile and desktop workstations powered by NVIDIA RTX PRO Blackwell GPUs are designed to handle local AI development and inferencing. These include updated ThinkPad and ThinkStation models, as well as a dedicated AI developer device capable of supporting models with up to 200 billion parameters.
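
To put the 200-billion-parameter figure in perspective, a rough estimate of the memory needed for model weights alone is parameters multiplied by bytes per parameter. The sketch below is a back-of-the-envelope calculation in Python. The announcement does not state which numeric precision or memory capacity the figure assumes, so the bit widths shown are illustrative assumptions, and the estimate ignores activations, the KV cache, and runtime overhead.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Memory for model weights alone, in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    params = 200e9  # 200 billion parameters, the figure quoted above
    for bits in (16, 8, 4):  # assumed precisions, not stated in the announcement
        print(f"{bits}-bit weights: {weight_memory_gb(params, bits):.0f} GB")
    # 16-bit weights: 400 GB
    # 8-bit weights: 200 GB
    # 4-bit weights: 100 GB
```

By this estimate, fitting a model of that size on a single desktop-class device would generally imply a low-precision (quantized) weight format.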

This reflects a hybrid approach in which AI workloads are distributed across local devices, on-premises infrastructure, and the cloud. Instead of relying entirely on cloud infrastructure, enterprises can run AI locally for lower latency, improved data control, and reduced costs.

Enterprise infrastructure and cost positioning

Lenovo is positioning its Hybrid AI Advantage as a cost-efficient alternative to cloud-heavy deployments. The company claims its solutions can deliver a return on investment in under six months and up to eight times lower cost per token than comparable cloud infrastructure offerings.
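
Claims of this kind rest on two simple calculations: cost per token (hourly infrastructure cost divided by tokens served per hour) and payback period (upfront spend divided by monthly savings). The Python sketch below shows the arithmetic only. The function names and every input figure are hypothetical placeholders, not Lenovo or cloud-provider data, and the simple payback model is an assumption rather than the company's stated methodology.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Infrastructure cost to serve one million tokens at a sustained rate."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


def payback_months(
    upfront_cost_usd: float,
    monthly_cloud_cost_usd: float,
    monthly_onprem_opex_usd: float,
) -> float:
    """Months until on-premises savings cover the upfront purchase."""
    monthly_savings = monthly_cloud_cost_usd - monthly_onprem_opex_usd
    if monthly_savings <= 0:
        raise ValueError("on-premises running costs are not lower than cloud costs")
    return upfront_cost_usd / monthly_savings


if __name__ == "__main__":
    # All inputs below are made-up placeholders for illustration only.
    cloud = cost_per_million_tokens(hourly_cost_usd=36.0, tokens_per_second=10_000)
    onprem = cost_per_million_tokens(hourly_cost_usd=9.0, tokens_per_second=10_000)
    print(f"cloud: ${cloud:.2f}/M tokens, on-prem: ${onprem:.2f}/M tokens")
    print(f"payback: {payback_months(250_000, 60_000, 10_000):.1f} months")
```

Real comparisons depend heavily on utilization: hardware that sits idle raises the effective on-premises cost per token, which is why sustained throughput is an input here.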

New ThinkSystem and ThinkEdge servers are optimized for inferencing workloads across sectors such as retail, manufacturing, healthcare, and smart cities. These systems integrate NVIDIA AI Enterprise software and support a range of configurations, from single-node deployments to multi-modal AI platforms.

Lenovo is also working with partners including IBM, Nutanix, Cloudian, and Veeam to provide validated enterprise stacks. The focus is on simplifying deployment while maintaining security and scalability for production AI environments.

Industry-specific AI and edge deployment

Beyond infrastructure, Lenovo is expanding its AI solutions library with industry-focused applications. These include real-time analytics for sports, in-store AI assistants for retail, and robotics-driven automation for manufacturing and mobility.

The company is also pushing edge AI use cases. Its Auto AI Box platform targets vehicle-based computing, supporting applications such as advanced driver assistance and predictive maintenance.

This aligns with broader enterprise demand for localized AI processing, particularly in environments where latency and data sovereignty are critical.

Scaling toward AI cloud “factories”

At the high end, Lenovo is preparing for large-scale AI cloud deployments using NVIDIA’s next-generation Vera Rubin platform. As a launch partner for the NVL72 system, Lenovo is developing liquid-cooled, rack-scale infrastructure designed for hyperscale and sovereign AI cloud providers.

The company claims these systems can deliver up to ten times higher throughput and significantly lower cost per token compared to previous generations, though detailed benchmarks were not disclosed.

This positions Lenovo within the emerging concept of AI factories, where large-scale infrastructure is dedicated to continuous AI inference and deployment rather than periodic model training.

Market context

Lenovo’s announcement highlights a key transition in enterprise AI. Training large models remains important, but the commercial value is increasingly tied to how efficiently those models can be deployed and run in production.

By combining on-device AI, edge computing, and large-scale infrastructure, Lenovo aims to offer a full-stack approach that competes with cloud-first providers. The strategy's success will depend on execution, particularly ease of deployment, software integration, and real-world cost savings.

More details and product demonstrations were presented during NVIDIA GTC, with Lenovo positioning its Hybrid AI Advantage as a framework for scaling AI from pilot projects to full production environments.